Source: Zhuanzhi (专知)

About 1,000 words; suggested reading time: 5 minutes

In this book, we present a balance of fundamentals and practical know-how to fully equip you to move forward.

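The field of deep learning has progressed exponentially, and so has the footprint of ML models such as BERT, GPT-3, and ResNet. While they work well, training and deploying these large (and growing) models in production is expensive. You might want to deploy your face-filter model on smartphones so that your users can add a puppy filter to their selfies, but the model may be too big or too slow. Or you might want to improve the quality of your cloud-based spam detection model without paying for a bigger cloud VM to host a more accurate but larger model. And what if you don't have enough labeled data for your model, or can't tune it by hand? All of this is daunting!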

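What if you could make your models more efficient: use fewer resources (model size, latency, training time, data, manual involvement) and deliver better quality (accuracy, precision, recall, etc.)? That sounds wonderful! But how?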

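This book walks through the algorithms and techniques that researchers and engineers at Google Research, Facebook AI Research (FAIR), and other eminent AI labs use to train and deploy their models on devices ranging from large server-side machines to tiny microcontrollers. We present a balance of fundamentals and practical know-how to fully equip you to optimize your model training and deployment workflows, so that your models perform as well as or better than before with a fraction of the resources. We also present deep dives into popular models, infrastructure, and hardware, along with challenging projects to test your skills.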

https://efficientdlbook.com/

Table of contents:

Part I: Introduction to Efficient Deep Learning

Introduction

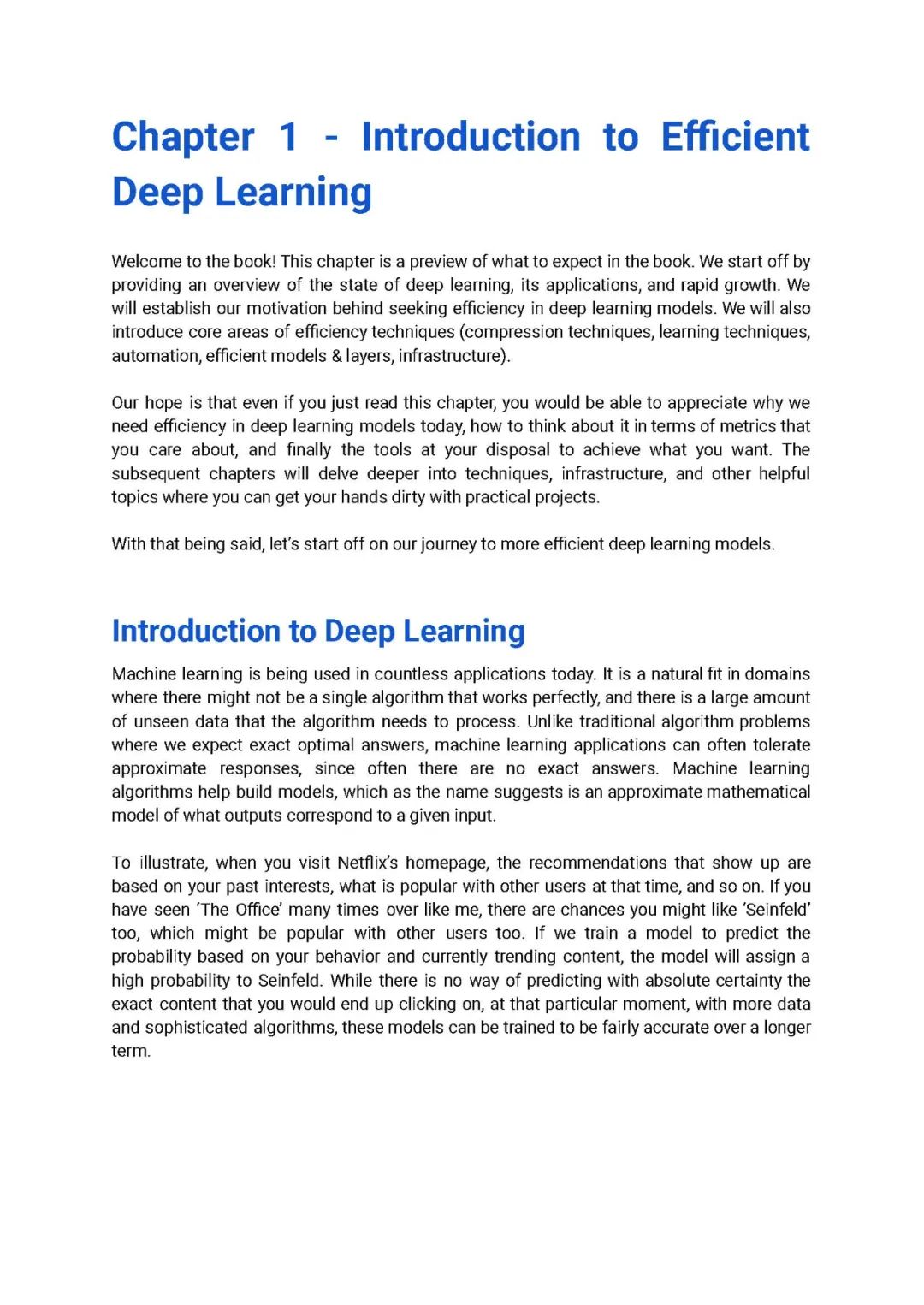

Introduction to Deep Learning

Efficient Deep Learning

Mental Model of Efficient Deep Learning

Summary

Part II: Efficiency Techniques

Introduction to Compression Techniques

An Overview of Compression

Quantization

Exercises: Compressing images from the Mars Rover

Project: Quantizing a Deep Learning Model

Summary

Introduction to Learning Techniques

Project: Increasing the accuracy of a speech identification model with Distillation.

Project: Increasing the accuracy of an image classification model with Data Augmentation.

Project: Increasing the accuracy of a text classification model with Data Augmentation.

Learning Techniques and Efficiency

Data Augmentation

Distillation

Summary

Efficient Architectures

Project: Snapchat-Like Filters for Pets

Project: News Classification Using RNN and Attention Models

Project: Using pre-trained embeddings to improve accuracy of an NLP task.

Embeddings for Smaller and Faster Models

Learn Long-Term Dependencies Using Attention

Efficient On-Device Convolutions

Summary

Advanced Compression Techniques

Exercise: Using clustering to compress a 1-D tensor.

Exercise: Mars Rover beckons again! Can we do better with clustering?

Exercise: Simulating clustering on a dummy dense fully-connected layer

Project: Using Clustering to compress a deep learning model

Exercise: Sparsity improves compression

Project: Lightweight model for pet filters application

Model Compression Using Sparsity

Weight Sharing using Clustering

Summary

Advanced Learning Techniques

Contrastive Learning

Unsupervised Pre-Training

Project: Learning to classify with 10% labels.

Curriculum Learning

Automation

Project: Layer-wise Sparsity to achieve a pareto optimal model.

Project: Searching over model architectures for boosting model accuracy.

Project: Multi-objective tuning to get a smaller and more accurate model.

Hyper-Parameter Tuning

AutoML

Compression Search

Part III: Infrastructure

Software Infrastructure

PyTorch Ecosystem

iOS Ecosystem

Cloud Ecosystems

Hardware Infrastructure

GPUs

Jetson

TPU

M1 / A4/5?

Microcontrollers

Part IV: Applied Deep Dives

Deep-Dives: TensorFlow Platforms

Project: Training BERT efficiently with TPUs.

Project: Face recognition on the web with TensorFlow.js.

Project: Speech detection on a microcontroller with TFMicro.

Project: Benchmarking a tiny on-device model with TFLite.

Mobile

Microcontrollers

Web

Google Tensor Processing Unit (TPU)

Summary

Deep-Dives: Efficient Models

Project: Efficient speech detection models.

Project: Comparing efficient mobile models on Mobile.

Project: Training efficient BERT models.

BERT

MobileNet

EfficientNet architectures

Speech Detection
